Summary

- The enhanced SDK enables OpenAI to deliver a standardized environment for building reliable and scalable autonomous workers.

- New sandbox features provide a secure execution layer for agentic AI to perform complex code tasks safely.

- Advanced handoff protocols allow multiple specialized agents to collaborate seamlessly across different business departments and functions.

- Integration with frontier models ensures that every agentic AI deployment benefits from superior reasoning and long-term planning capabilities.

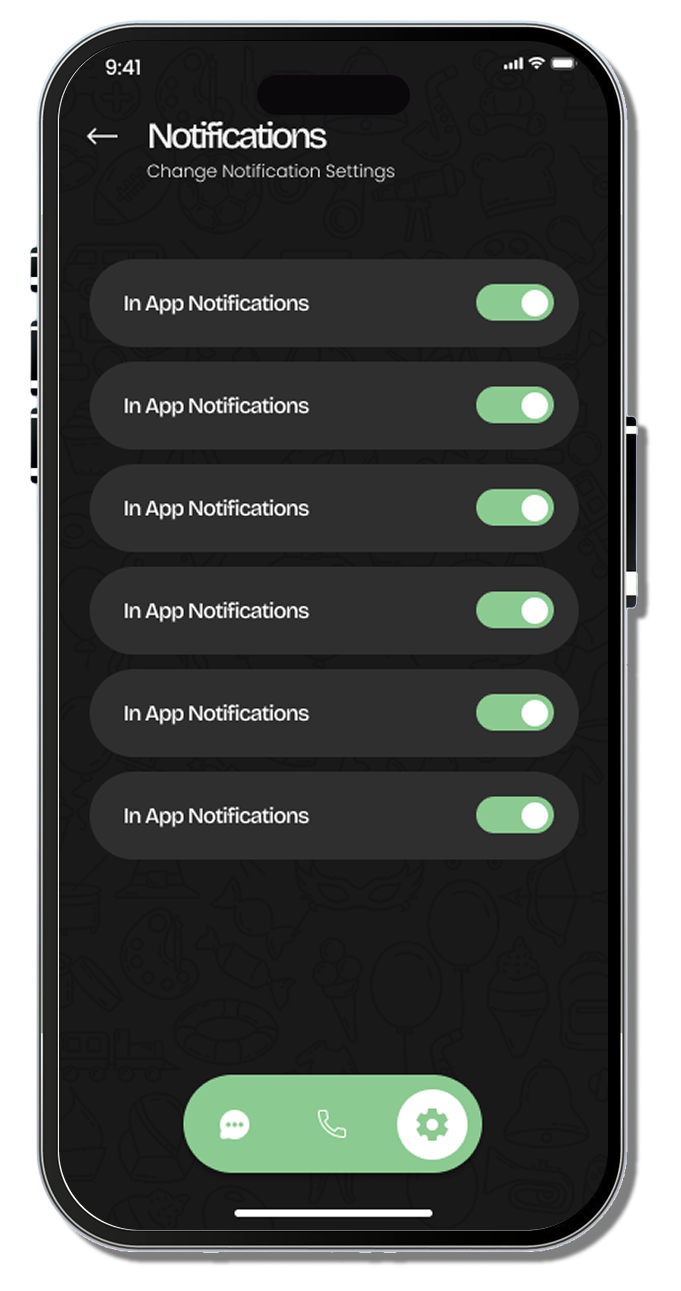

- Standardized guardrails help developers manage OpenAI systems by implementing human-in-the-loop triggers for sensitive enterprise operations.

The demand for agentic AI has skyrocketed as businesses seek to automate complex, multi-step workflows that require more than just text generation. On April 15, 2026, OpenAI officially launched a major evolution of its Agents SDK, introducing features designed specifically for the rigors of corporate environments. This update marks a transition from experimental “assistant” models to “executive” systems capable of handling long-horizon tasks with a level of reliability previously unseen in the field.

Building autonomous agents has historically been a fragmented process, often requiring developers to stitch together multiple frameworks while worrying about unpredictable model behavior. The new SDK addresses these “hallucination hurdles” by providing standardized infrastructure. By centralizing the orchestration of models, tools, and data, the update allows developers to create specialized workers that can collaborate on large-scale projects. This progress follows a period of intense organizational growth, including a leadership restructuring that expands COO Brad Lightcap’s role in aligning product development with enterprise-scale operational needs.
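Centralized tool orchestration often comes down to one pattern: every tool is registered in a single table, and a tool call arriving as structured data is dispatched by name. The following is a minimal sketch of that pattern in plain Python. It is not the Agents SDK’s API; `TOOLS`, `tool`, `lookup_order`, `dispatch` and the `{"name", "arguments"}` call format are hypothetical names chosen for illustration.

```python
import json
from typing import Callable, Dict

# Hypothetical central registry: tool name -> Python function.
TOOLS: Dict[str, Callable[..., object]] = {}


def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Register a function under its own name so any worker can call it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_order(order_id: int) -> str:
    return f"order {order_id}: shipped"  # placeholder for a real data source


def dispatch(call_json: str) -> object:
    """Execute a tool call expressed as JSON (e.g. emitted by a model)."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return fn(**call.get("arguments", {}))


print(dispatch('{"name": "lookup_order", "arguments": {"order_id": 7}}'))  # order 7: shipped
```

Because every worker goes through the same `dispatch` function, logging, permission checks or rate limits can be added in one place instead of in each tool.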

Frontier Model Support and Step-by-Step Adaptation

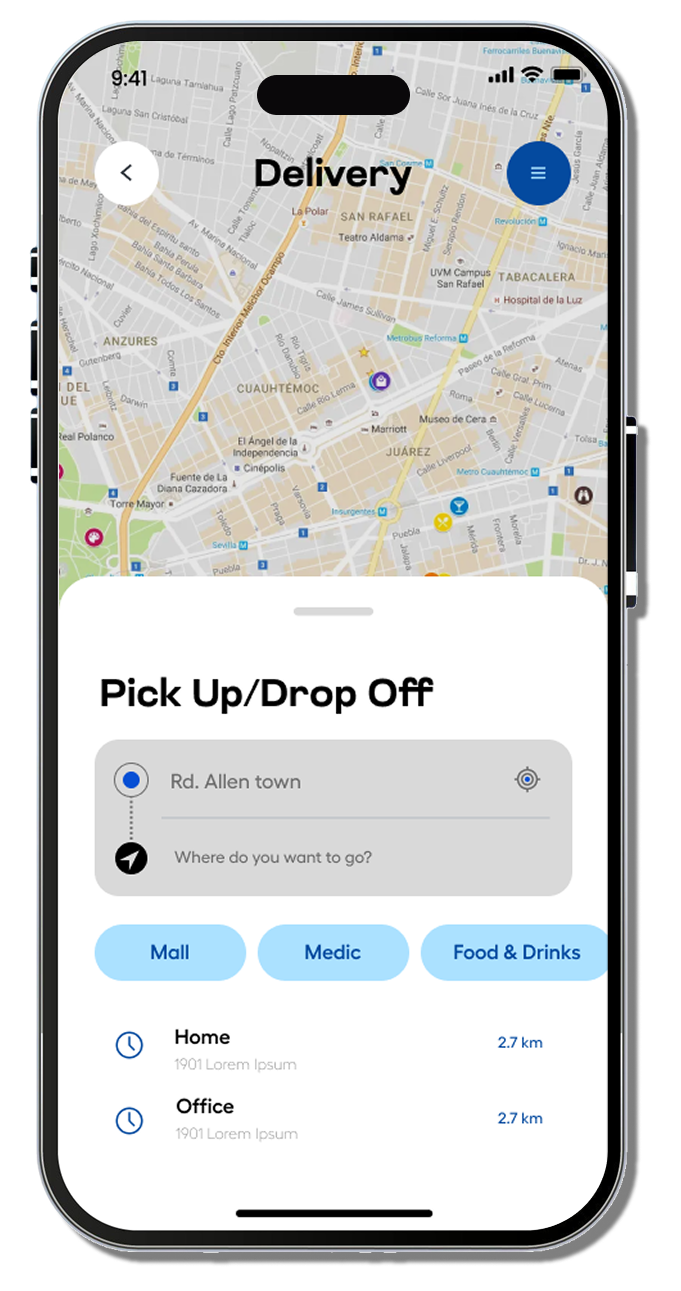

One of the most critical additions to the toolkit is the integrated sandbox environment. This feature acts as a “safety harness” for agentic AI, allowing code to execute in an isolated, container-based workspace. Enterprises can now deploy OpenAI models to work with sensitive files or system commands without risking the integrity of their core infrastructure. If an agent performs an unexpected action, the sandbox ensures the blast radius is contained within the secure workspace.
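The principle behind a sandbox can be illustrated at a much smaller scale. The sketch below assumes nothing about how OpenAI’s container-based workspace is actually implemented. It runs untrusted Python in a separate process with a time limit and a throwaway working directory, so files the code writes disappear when the run finishes. The function name `run_in_scratch_dir` is hypothetical, and process-level isolation like this is far weaker than a container: the child process can still reach the network and any path the user can access.

```python
import subprocess
import sys
import tempfile


def run_in_scratch_dir(code: str, timeout_s: float = 5.0) -> dict:
    """Run untrusted Python code in a child process inside a throwaway directory.

    Illustrative only: this limits run time and working directory, but it is
    NOT a security boundary (no container, no network or filesystem limits).
    """
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: ignore PYTHON* env vars and user site-packages
                cwd=workdir,            # relative paths resolve inside the scratch dir
                capture_output=True,
                text=True,
                timeout=timeout_s,      # child is killed if it runs too long
            )
        except subprocess.TimeoutExpired:
            return {"ok": False, "stdout": "", "stderr": "timed out"}
    # The scratch directory and anything written into it are gone at this point.
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr}


result = run_in_scratch_dir("open('notes.txt', 'w').write('draft'); print('done')")
print(result["ok"], result["stdout"].strip())  # True done
```

A production sandbox would add the pieces this sketch leaves out, such as a separate filesystem, resource quotas and network policy, which is what a container-based workspace is meant to provide.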

Furthermore, the SDK is now optimized for frontier models, including the reasoning-heavy o1 and the highly anticipated GPT-5 series. These models are stronger at planning and self-correction, which are the hallmarks of successful agents. The infrastructure is designed to handle “long-horizon” tasks: a single process can run for hours or even days, pausing for human approval when necessary and resuming once a green light is given. This level of sophistication is backed by a massive influx of capital, as OpenAI raises money from retail investors in a record-breaking funding round to sustain the immense compute requirements of these advanced agentic frameworks.
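A long-horizon task that pauses for approval can be modeled as a checkpointed state machine. As a minimal sketch under that assumption (the `Step`, `TaskState` and `advance` names are hypothetical and not part of the SDK), the workflow below runs steps until it reaches one that needs sign-off, serializes its progress to JSON, and resumes from the same step once approval is recorded.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Set


@dataclass
class Step:
    name: str
    needs_approval: bool = False  # pause here until a human signs off


@dataclass
class TaskState:
    next_index: int = 0                        # checkpoint: first step not yet run
    log: List[str] = field(default_factory=list)
    status: str = "running"                    # "running", "paused" or "done"


def advance(steps: List[Step], state: TaskState, approved: Set[str]) -> TaskState:
    """Run steps from the checkpoint until finished or blocked on approval."""
    state.status = "running"
    while state.next_index < len(steps):
        step = steps[state.next_index]
        if step.needs_approval and step.name not in approved:
            state.status = "paused"  # caller persists state and waits for a human
            return state
        state.log.append(f"ran {step.name}")  # placeholder for the real work
        state.next_index += 1
    state.status = "done"
    return state


steps = [Step("gather_data"), Step("draft_report"), Step("send_to_client", needs_approval=True)]
state = advance(steps, TaskState(), approved=set())
print(state.status, state.log)  # paused ['ran gather_data', 'ran draft_report']

saved = json.dumps(asdict(state))       # checkpoint can be stored durably
state = TaskState(**json.loads(saved))  # later: reload and resume with approval
state = advance(steps, state, approved={"send_to_client"})
print(state.status, state.next_index)   # done 3
```

Keeping the whole state JSON-serializable is what lets a task survive restarts: the process that resumes the work does not need to be the one that paused it.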

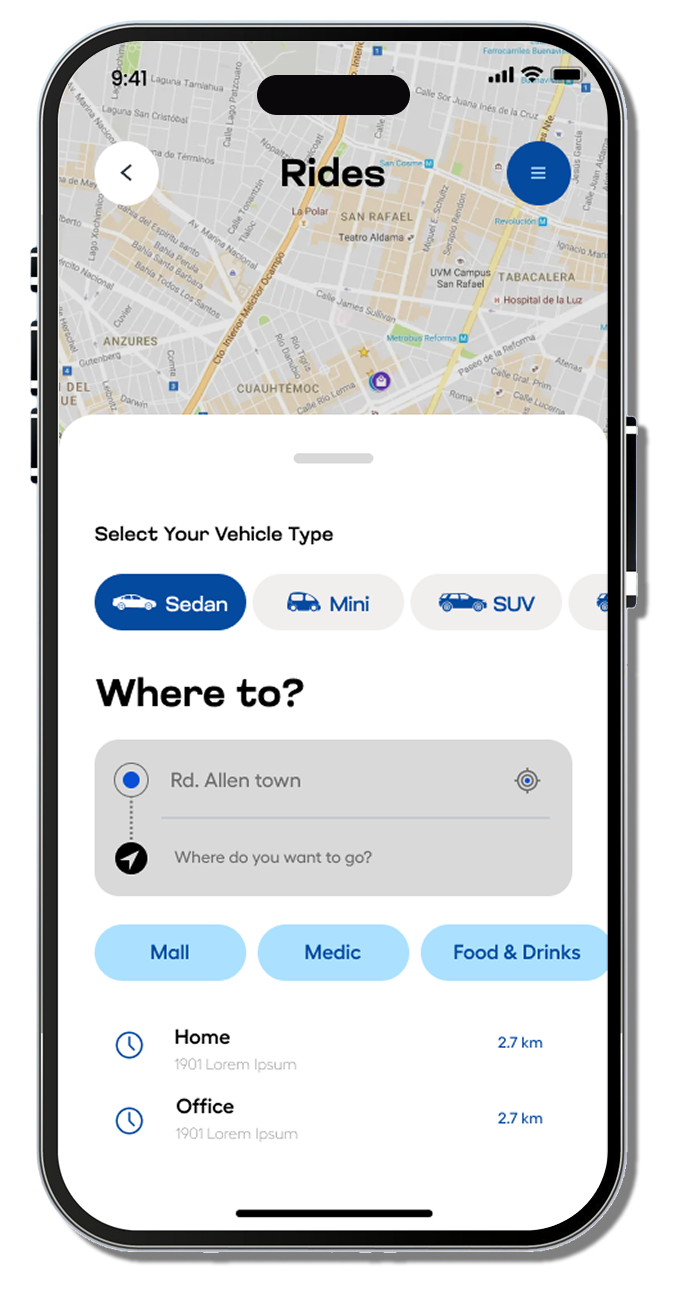

Developers are encouraged to follow a step-by-step adaptation path:

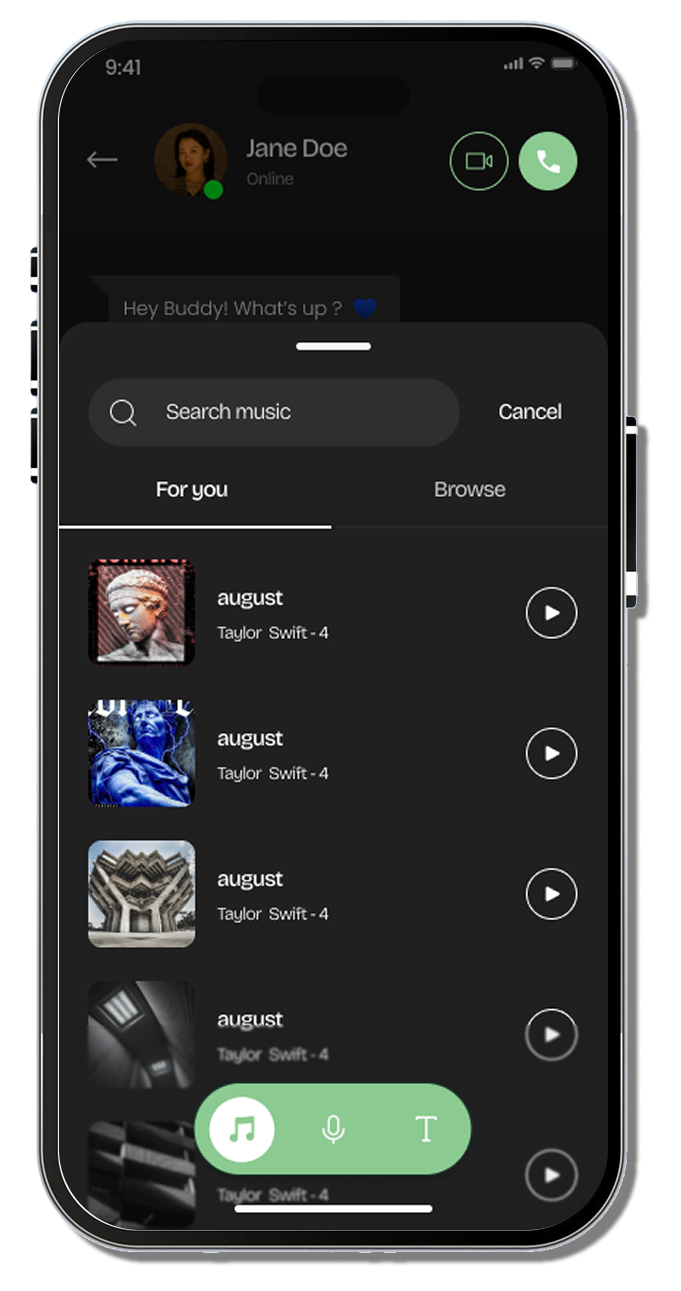

- Quickstart: Defining a single specialist to handle one specific function.

- Orchestration: Setting up handoffs between multiple agents for complex cross-departmental tasks.

- Guardrails: Implementing human-in-the-loop triggers for high-risk operations.
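The three stages of that path can be sketched together. Each `Specialist` is a single-function worker (Quickstart), `triage` hands a request off to one of them (Orchestration), and `run` refuses to let a high-risk specialist act without sign-off (Guardrails). In this illustration, a keyword match stands in for the model’s routing decision and an `approve` callback stands in for a human reviewer. `Specialist`, `triage`, `run` and the sample team are hypothetical and do not reflect the Agents SDK’s actual interface.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Specialist:
    name: str
    keywords: Tuple[str, ...]          # stand-in for the model's routing judgment
    handle: Callable[[str], str]       # the specialist's actual work
    high_risk: bool = False            # if True, a human must approve before it acts


def triage(request: str, specialists: List[Specialist]) -> Optional[Specialist]:
    """Hand off to the first specialist whose keywords appear in the request."""
    text = request.lower()
    for specialist in specialists:
        if any(keyword in text for keyword in specialist.keywords):
            return specialist
    return None


def run(request: str, specialists: List[Specialist],
        approve: Callable[[str, str], bool]) -> str:
    target = triage(request, specialists)
    if target is None:
        return "no specialist matched; escalate to a human"
    # Guardrail: high-risk specialists only act after explicit human approval.
    if target.high_risk and not approve(target.name, request):
        return f"{target.name}: blocked pending human approval"
    return target.handle(request)


team = [
    Specialist("billing", ("invoice", "refund"), lambda r: "billing: refund drafted", high_risk=True),
    Specialist("it", ("password", "laptop"), lambda r: "it: reset link sent"),
]
reviewer_says_no = lambda agent, request: False
print(run("Reset my password", team, reviewer_says_no))            # it: reset link sent
print(run("Issue a refund for order 42", team, reviewer_says_no))  # billing: blocked pending human approval
```

Placing the approval check in the orchestration layer rather than inside each specialist means one policy covers every high-risk handoff, and new specialists inherit it automatically.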