Summary

- Matt Garman solidifies AWS as the leading infrastructure provider for the next generation of Enterprise AI solutions.

- Massive investments in Anthropic and OpenAI support platform neutrality and reduce single-provider lock-in for global businesses.

- The strategy centers on providing a diverse toolbox where Enterprise clients can leverage both Anthropic and OpenAI models.

- Commitments to AWS's Trainium chips let Anthropic and OpenAI scale frontier models efficiently on Amazon hardware.

- Matt Garman emphasizes that backing both Anthropic and OpenAI positions AWS to dominate the future of agentic technology.

Digital Software Labs tracks the rapidly shifting landscape of artificial intelligence, where traditional boundaries between partnership and competition are dissolving. In a landmark session at the HumanX conference in San Francisco, AWS CEO Matt Garman directly addressed the industry’s curiosity regarding Amazon’s massive financial commitments to two of the world’s most prominent AI rivals. Following an $8 billion investment in Anthropic, Amazon recently made headlines with a staggering $50 billion commitment to OpenAI. For many analysts, backing two “enemy companies” whose leaders have historically clashed seems like a strategic paradox. However, Garman frames this as a calculated move to ensure that AWS remains the primary infrastructure provider for the next generation of Enterprise technology.

The decision to fund both organizations is not merely about financial returns; it is both a defensive and an offensive maneuver in the ongoing "Cloud Wars." By securing deep ties with both Anthropic and OpenAI, Amazon ensures that its customers have access to the most advanced models available, regardless of which firm holds the lead at any given moment. This strategy positions AWS as neutral ground where Enterprise clients can build versatile applications without being locked into a single provider's ecosystem. The move also counters the influence of Microsoft, which had previously been the exclusive cloud home for OpenAI's frontier models.

Why it matters

The stakes in the AI race have reached a level where access to “frontier” intelligence is considered a matter of survival for cloud providers. As Matt Garman explained, having these models available on AWS was a competitive necessity. Before this massive investment, both the Claude series and GPT models were already integrated into Microsoft Azure, giving Amazon’s primary rival a significant advantage in attracting high-value Enterprise contracts. For developers and businesses, this news signals a shift toward a multi-model future where the ability to switch between different AI “engines” is more valuable than loyalty to a single brand.

This development also highlights the changing nature of corporate competition in the 2020s. Companies increasingly must collaborate and compete at the same time, a dynamic often referred to as "co-opetition." When Matt Garman spoke about the $50 billion investment, he noted that managing such conflicts is part of the company's DNA. A similar pattern appears elsewhere in the market, as when OpenAI launched open-source tools for teen safety in an effort to set industry standards that even its competitors might eventually adopt. The focus is no longer on winning a single segment but on owning the infrastructure that powers every segment.

The details

During the conference, the AWS chief explained how the company manages the friction of being a primary partner to two fierce competitors. He emphasized that AWS has a long history of hosting partners that are also rivals. For instance, even Oracle, one of Amazon's oldest and most direct cloud competitors, offers its database services on the AWS platform. This precedent allowed Garman to dismiss concerns about a conflict of interest, stating that the company has built the "skills needed to work alongside partners" while ensuring that its own internal products do not receive an unfair advantage.

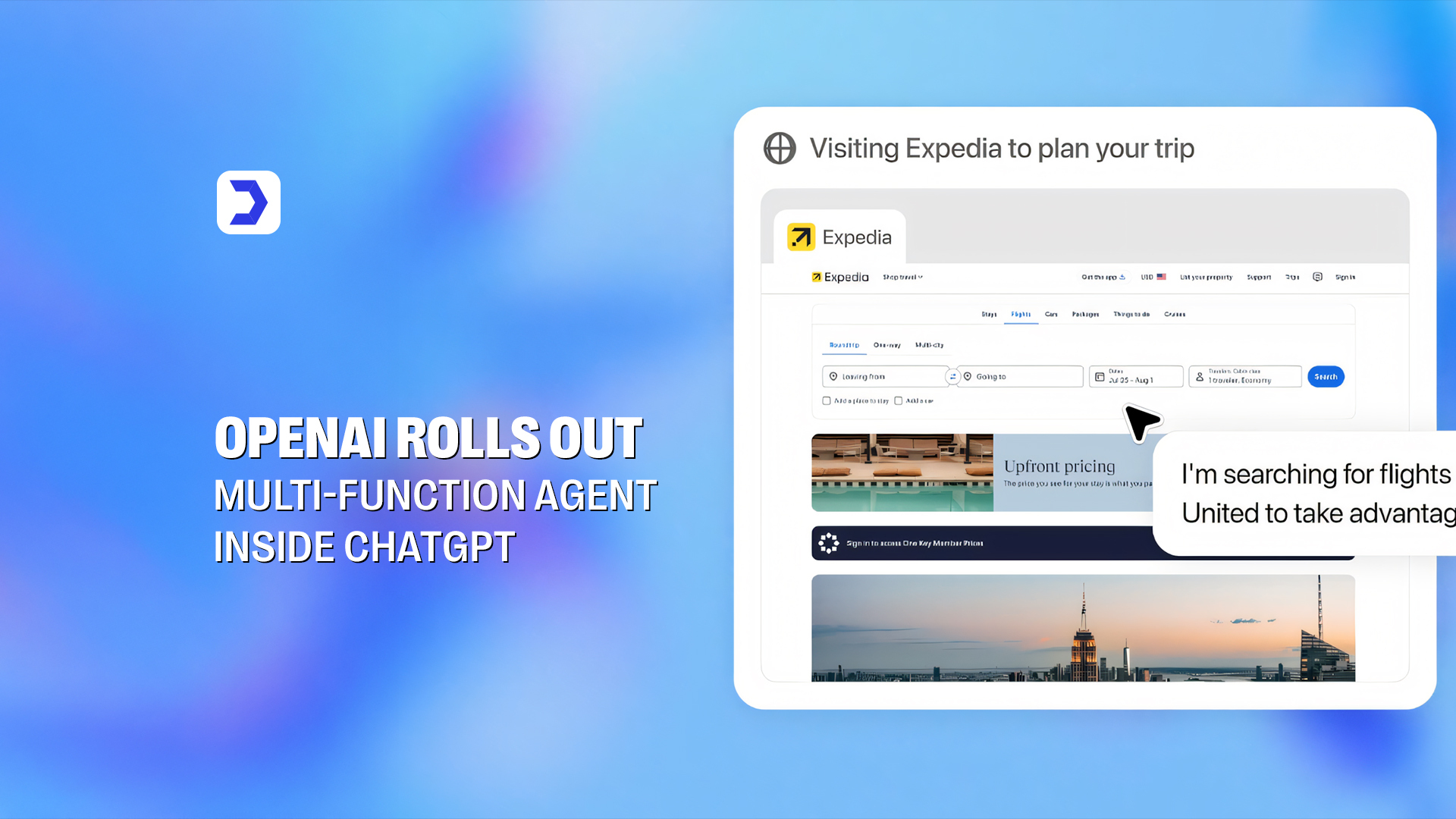

The technical integration of these models goes beyond simple API access. A critical component of the deal involves hardware: Anthropic has committed to using Amazon's custom Trainium and Inferentia chips to train and deploy its models. Similarly, the partnership with OpenAI includes a commitment to use 2 gigawatts of Trainium capacity. This hardware-software pairing lets AWS refine its silicon design around the needs of the world's most demanding AI workloads. Some consumer-facing projects still hit setbacks, such as OpenAI delaying the ChatGPT adult mode launch once again, which caused temporary friction among users, but the underlying infrastructure partnership is structured as a long-term commitment.

Looking ahead, the focus is expected to shift from simple content generation to "agentic" AI: systems that can perform tasks such as processing insurance claims or managing supply chains autonomously. This transition requires the kind of massive, reliable computing power that only a few global giants can provide. For readers following the latest technology news from Digital Software Labs, the message from San Francisco is clear: the winners of the AI era will be those who provide the infrastructure for everyone else to win. Amazon is betting $58 billion that it will be the one holding the keys to that infrastructure.