Summary

- OpenAI introduces open-source tools to improve teen safety across AI systems like ChatGPT

- New safety layers help ensure ChatGPT interactions remain age-appropriate and controlled for teen users

- Focus on teen safety includes content moderation, behavior monitoring, and adaptive AI responses

- OpenAI encourages developers to build safer AI environments using customizable open-source frameworks

- The initiative strengthens trust in ChatGPT while positioning OpenAI at the center of responsible AI development

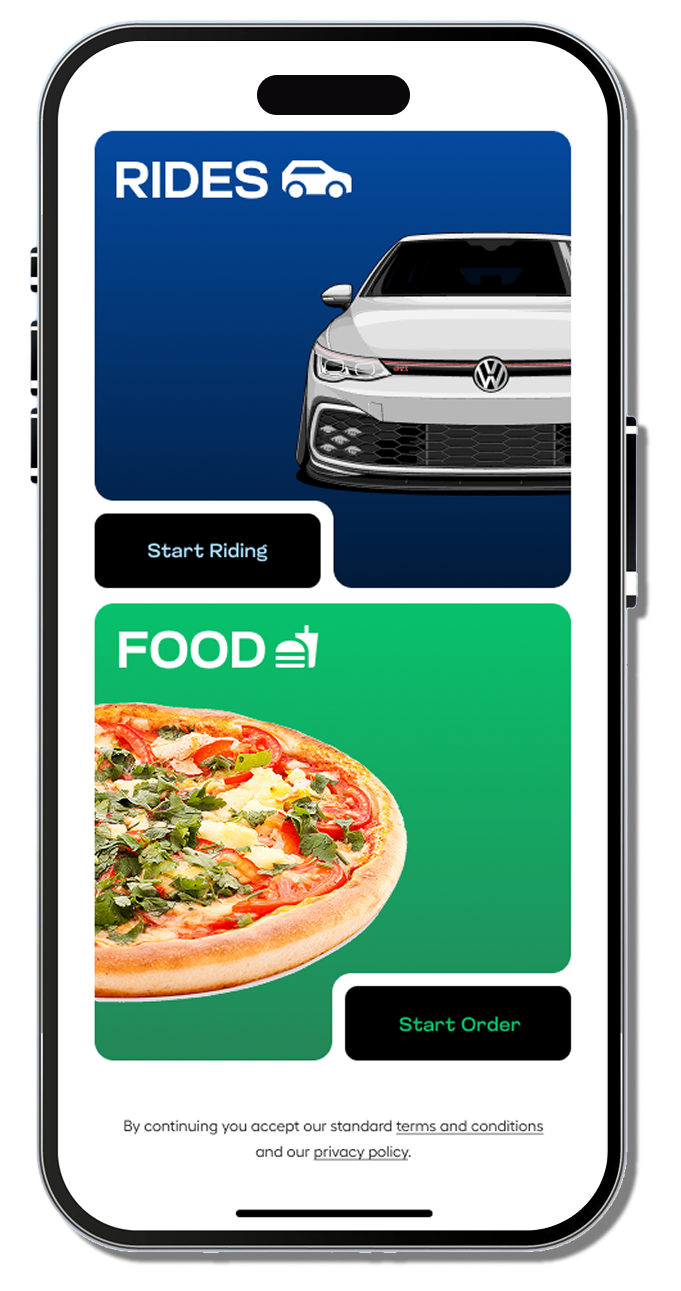

The conversation around digital safety is no longer limited to social media platforms or gaming environments. As AI tools become part of everyday life, especially for younger audiences, the responsibility to create safer digital spaces has grown significantly. With tools like ChatGPT being used for learning, communication, and even personal guidance, the expectations around safety, control, and accountability have shifted.

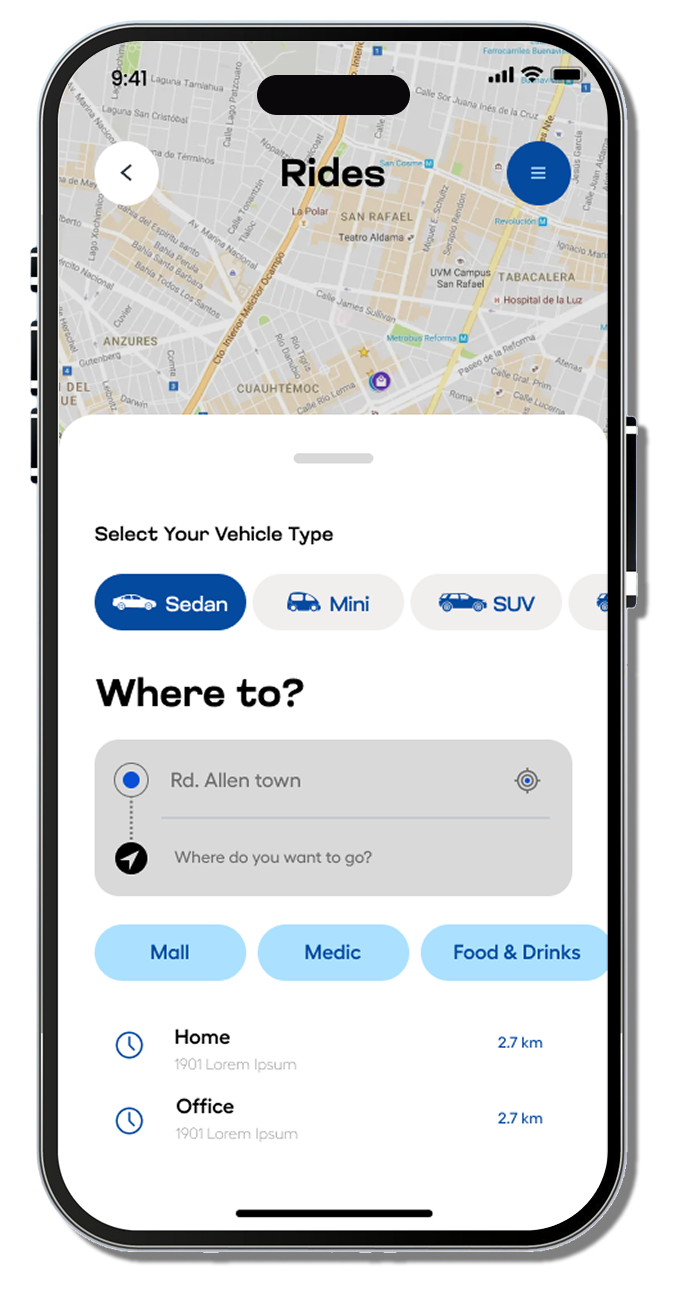

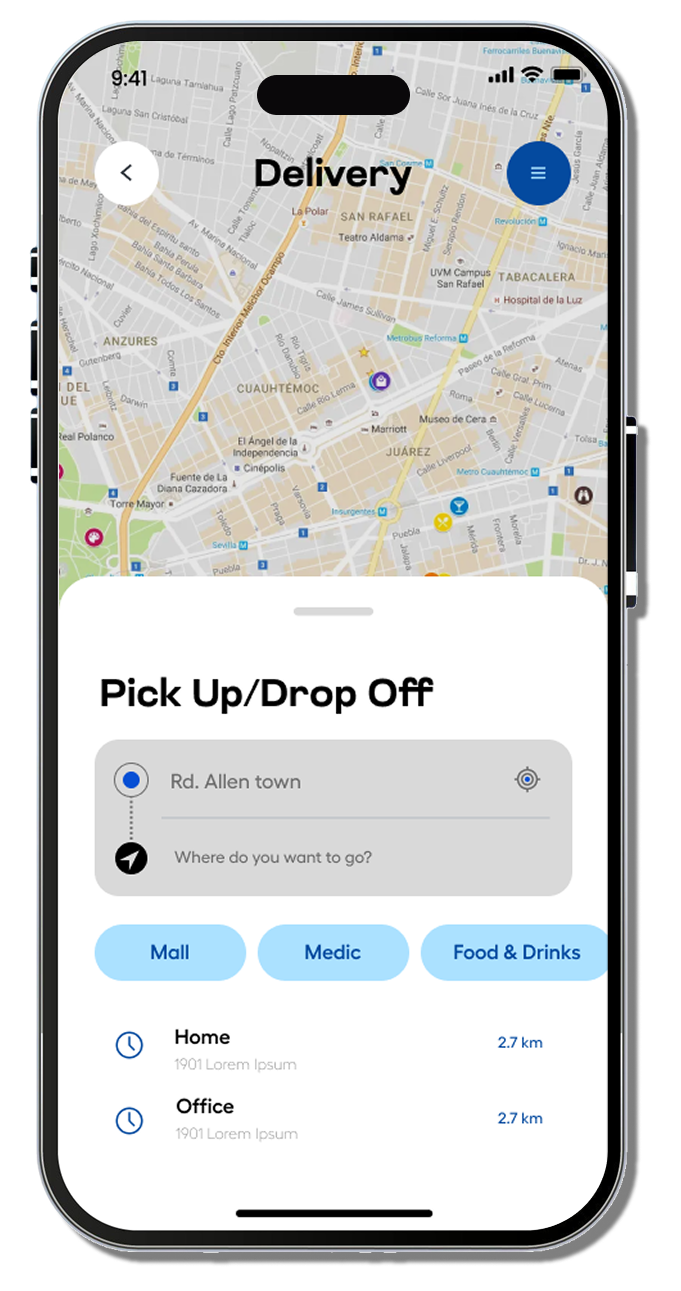

In response to this growing concern, OpenAI has introduced a set of open-source tools designed specifically to support safer experiences for teens. This move is not just a technical upgrade; it reflects a broader change in how AI systems are being developed and deployed for younger users. Instead of treating safety as an internal feature, OpenAI is making it a shared responsibility by giving developers and organizations the tools needed to implement safeguards directly within their platforms.

Teen safety is becoming one of the defining factors in how AI products are evaluated. The way these systems interact with younger users, filter content, and manage sensitive conversations will shape trust, adoption, and long-term usage. This initiative signals a clear direction where safety is no longer optional but central to AI development.

Another important aspect of this initiative is transparency. Open-source tools allow developers, educators, and safety experts to examine how these systems work and contribute to their improvement. This collaborative approach can increase trust and helps safety measures evolve based on real-world feedback rather than remaining static.

The timing of this release also reflects the competitive nature of the AI industry. As companies push to introduce more advanced models, safety has become a critical point of differentiation. The discussion around AI is no longer just about speed or intelligence; it is about how these systems behave in sensitive situations and how they protect users, especially younger ones.

This shift is visible in broader industry developments, where OpenAI continues to refine its approach to both performance and responsibility. Coverage of GPT-5.2 and OpenAI’s response to industry pressure points in the same direction: stronger safeguards and controlled deployments, with performance improvements closely tied to how safely these systems can operate at scale.

At the same time, ongoing coverage across the AI space shows safety becoming part of every major update and release. New tools, model updates, and policy changes continue to evolve in parallel with these safety initiatives. The pattern surfaces repeatedly in the Digital Software Labs news section, where each development adds another layer to how AI is being shaped for real-world use.