Summary

- The model removes unnecessary moralizing preambles, defensive caveats, and “calm down” prompts, providing more direct and efficient answers.

- Hallucination rates drop by approximately 27% for queries answered with web search and by nearly 20% for answers drawn from the model’s internal knowledge, making the information it returns more reliable.

- It integrates search results with its own reasoning more effectively, avoiding long lists of links and surfacing the most relevant information upfront.

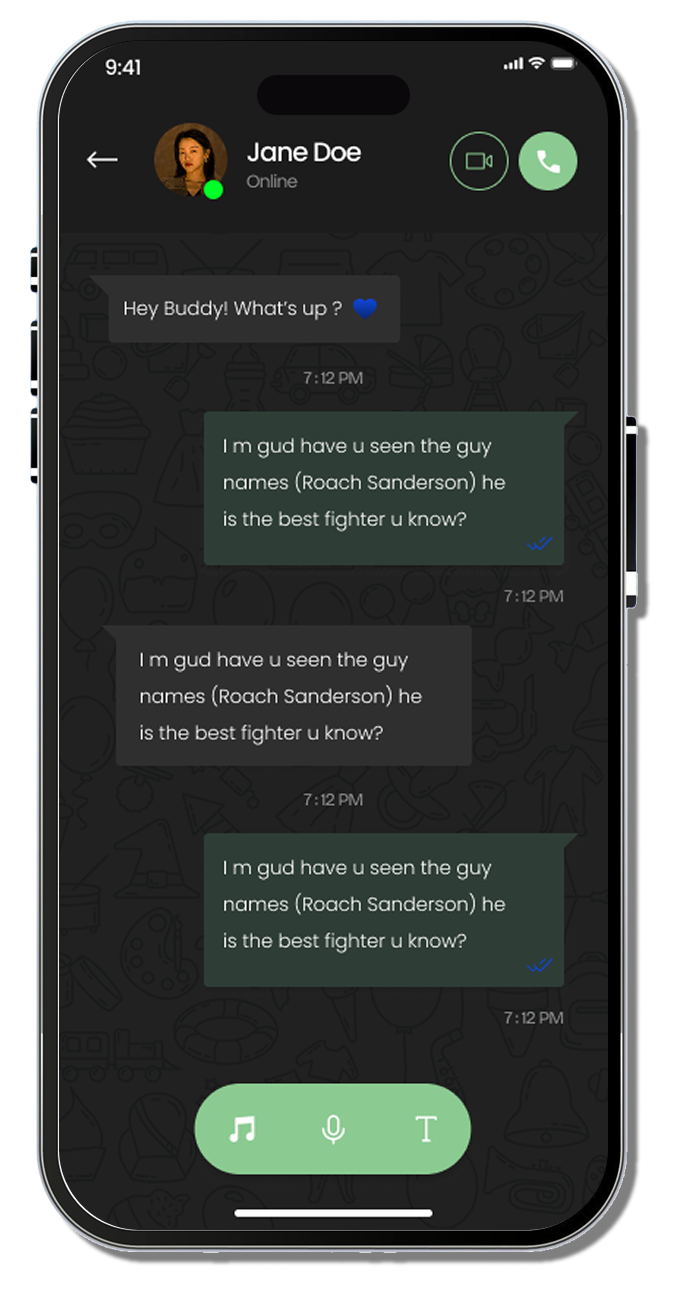

- The model is tuned to feel more natural and less robotic, focusing on relevant, contextual dialogue that doesn’t “beat around the bush.”

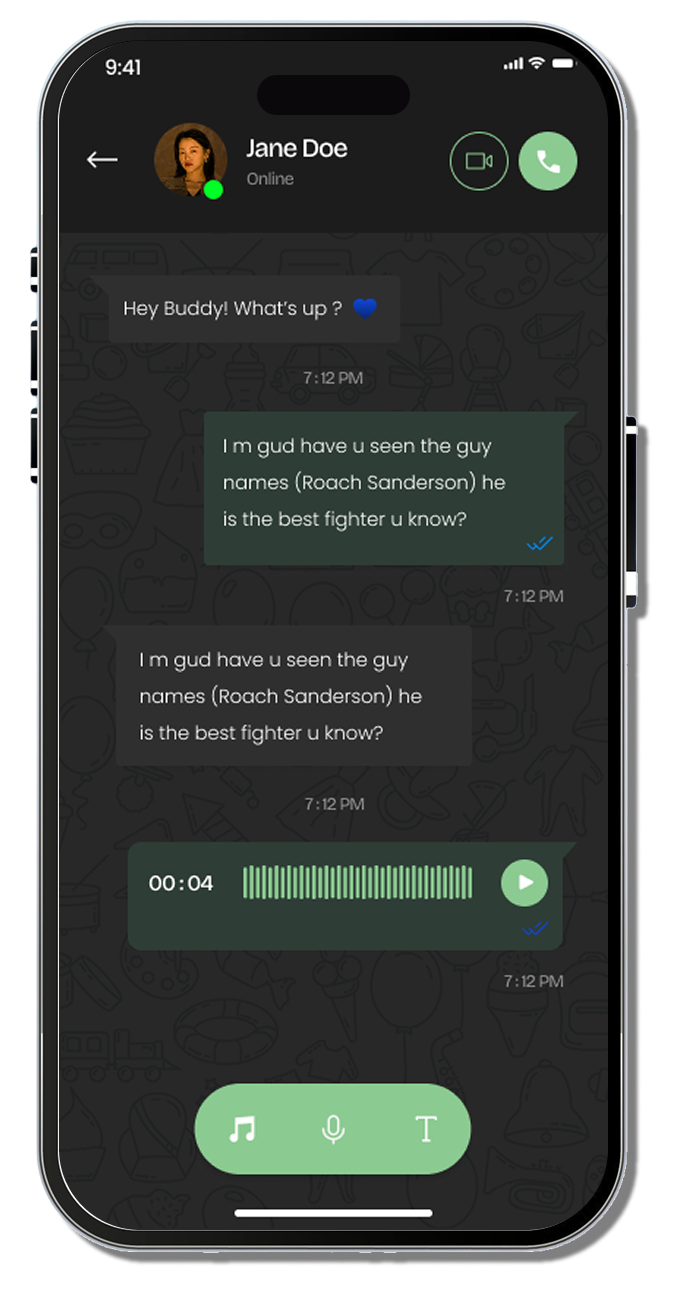

- It is designed as a high-speed “workhorse” for everyday tasks such as writing, coding, and research, prioritizing practical utility over purely academic benchmarks.

For the millions of users who rely on OpenAI for daily productivity, the way they interact with these tools has shifted significantly. The release of the GPT-5.3 Instant model marks a departure from the overly cautious and sometimes moralizing tone that plagued its predecessors. Gone are the days when users felt as if they were being lectured by a digital librarian; the update removes those frustrating “Take a breath” and “Stop” prompts, aiming to make ChatGPT a more direct, helpful, and less condescending assistant.

This evolution is not merely cosmetic. It reflects a broader change in how OpenAI views its relationship with users. By analyzing millions of interactions, OpenAI’s engineers found that the “cringe” factor (long-winded disclaimers and unnecessary refusals) was actually obstructing professional workflows. When users need to extract information from complex data or refine code, they don’t want a lecture on ethics or on how to phrase their query; they want results. GPT-5.3 Instant provides exactly that, cutting through the noise and delivering answers that arrive faster and are more contextually accurate.

The need for this update becomes clear in the broader competitive environment. Pressure on AI providers to deliver seamless, non-intrusive integration is at an all-time high. Users are no longer just comparing chatbots; they are evaluating entire ecosystems, especially as Google launches Android 16 with smarter alerts, controls, and UI upgrades. If an AI model forces users to jump through hoops or apologize for asking a valid question, they will inevitably drift toward an environment where the technology feels more intuitive and better aligned with their goals.

This release also arrives amid rapid internal development cycles. OpenAI recognized that raw reasoning benchmarks were only half the battle: usability, defined by speed, lack of friction, and conversational flow, is what actually drives adoption. After OpenAI responded to Google with GPT-5.2 following the “code red” memo, it became clear that refining the interaction layer was paramount. While the tech community continues to track the development of ChatGPT 5 and its iterations, the practical reality is that most users interact with the “Instant” models more than with any other tier. By optimizing the personality and responsiveness of this specific model, OpenAI is securing the foundational “habit” that makes ChatGPT the most widely used AI tool in the world.

GPT-5.3 personality

The most immediate change users will notice is the reduction in “over-caveating.” Previous versions often assumed bad intent or moral ambiguity where none existed: a technical question about a sensitive topic might have produced an answer buried under three paragraphs of context-setting. GPT-5.3 is engineered to skip these moralizing preambles. It treats the user as a capable individual who needs information, not guidance.

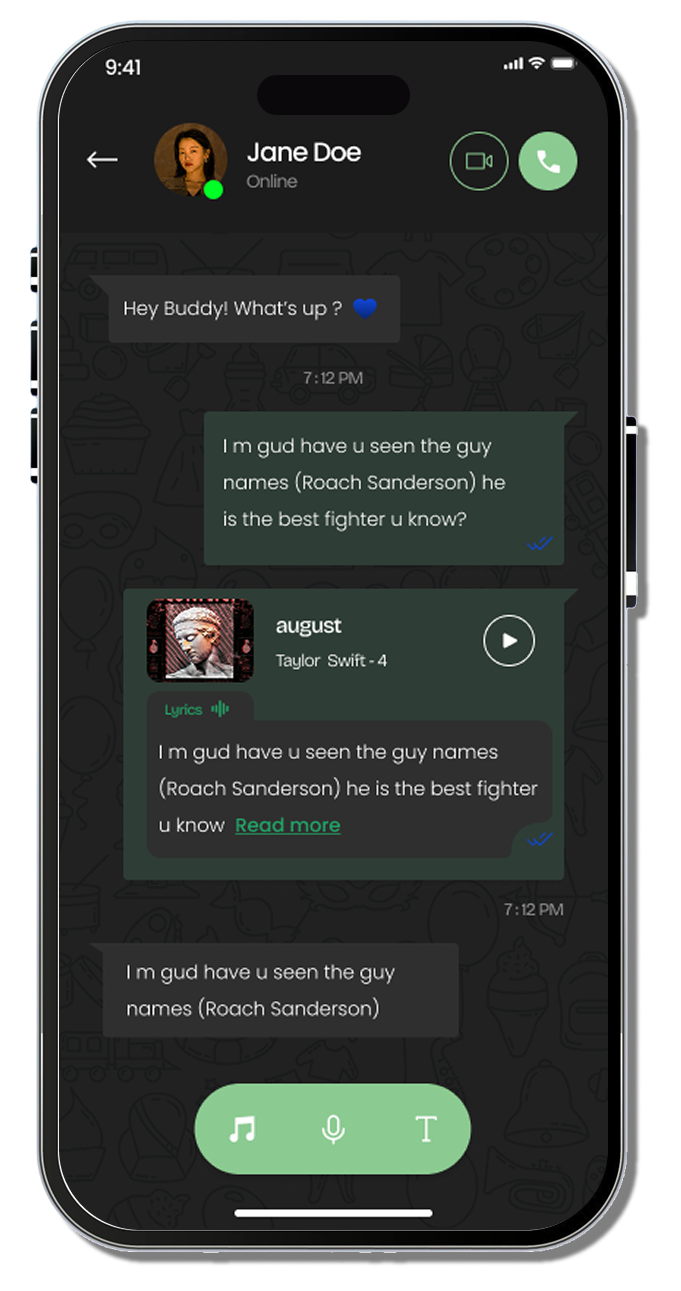

This shift in personality is also visible in how the model uses web search. Instead of regurgitating lists of links or dumping summary snippets, it processes online information and synthesizes it into a single, usable answer, a more sophisticated approach to research. For those managing apps and digital workflows, this means less time spent manually verifying search results and more time using the output directly. The tone is warmer, more conversational, and, crucially, less defensive. It no longer tries to “calm you down”; it simply answers the question. For those who want to track how these personality shifts align with broader industry trends, our latest news updates at Digital Software Labs offer a comprehensive look at the evolution of these conversational interfaces.

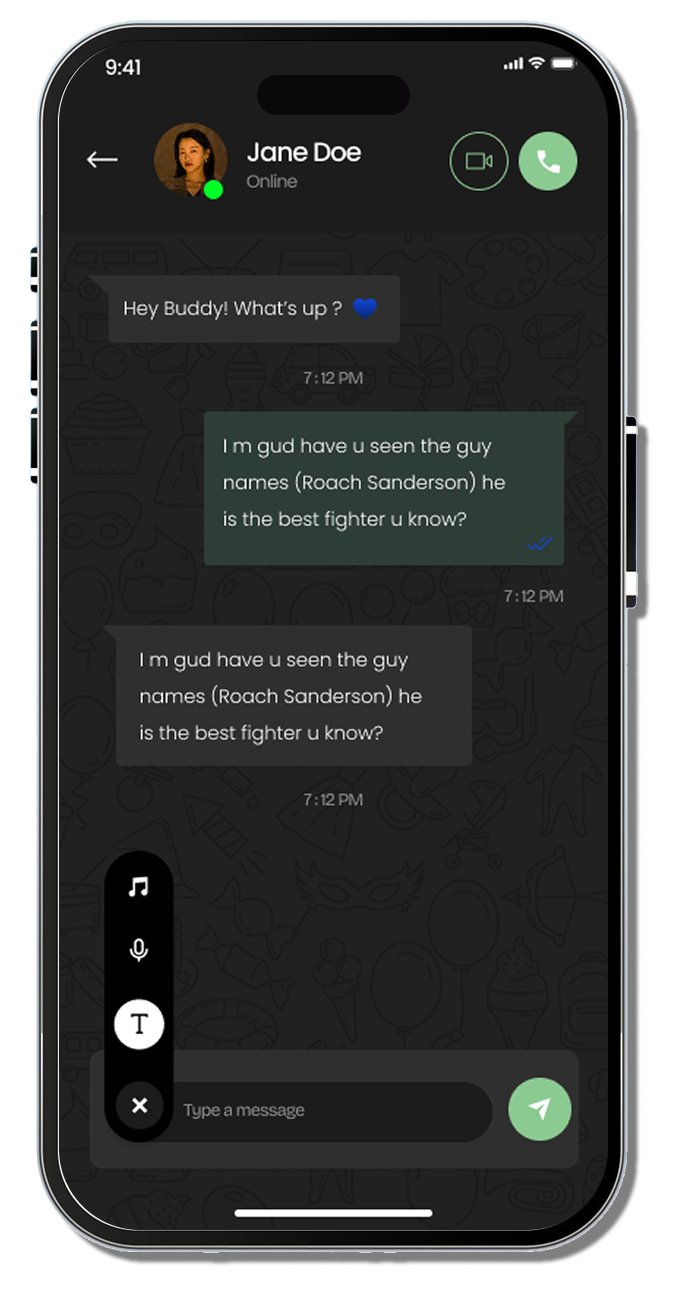

This refinement is part of a larger push to let users customize how their AI sounds. New personalization settings allow toggling between “Efficient,” “Professional,” and “Candid” modes so that the model’s “personality” fits the user’s specific professional context. Whether you are debugging code, drafting legal summaries, or brainstorming ideas for a creative project, the model adapts its linguistic style to minimize friction.