Summary

- OpenAI’s retirement of GPT-4o sparked widespread concern, as users questioned why a highly trusted and emotionally adaptive model was removed without a clear long-term transition plan.

- Global backlash revealed how deeply people rely on AI assistants, with many describing disruption in workflow, creativity, emotional grounding, and daily structure after GPT-4o disappeared.

- Ethical debates intensified around AI companion models, focusing on user dependence, emotional attachment, continuity rights, and whether companies should be obligated to offer model stability.

- Industry competition shaped public suspicion, especially as OpenAI accelerated development toward next-generation systems amid reports of strategic pressure and rapid product cycles.

The decision by OpenAI to retire GPT-4o has sparked a global debate on where ethics, user trust, and technological responsibility intersect in the age of advanced AI. For many users, GPT-4o was more than a version change; it was a daily collaborator that helped with complex problem-solving, emotional context, and creative work. Its sudden retirement has highlighted the fragility of user reliance on AI systems that are both powerful and emotionally adaptive, bringing into focus why continuity and responsible transition matter more than ever.

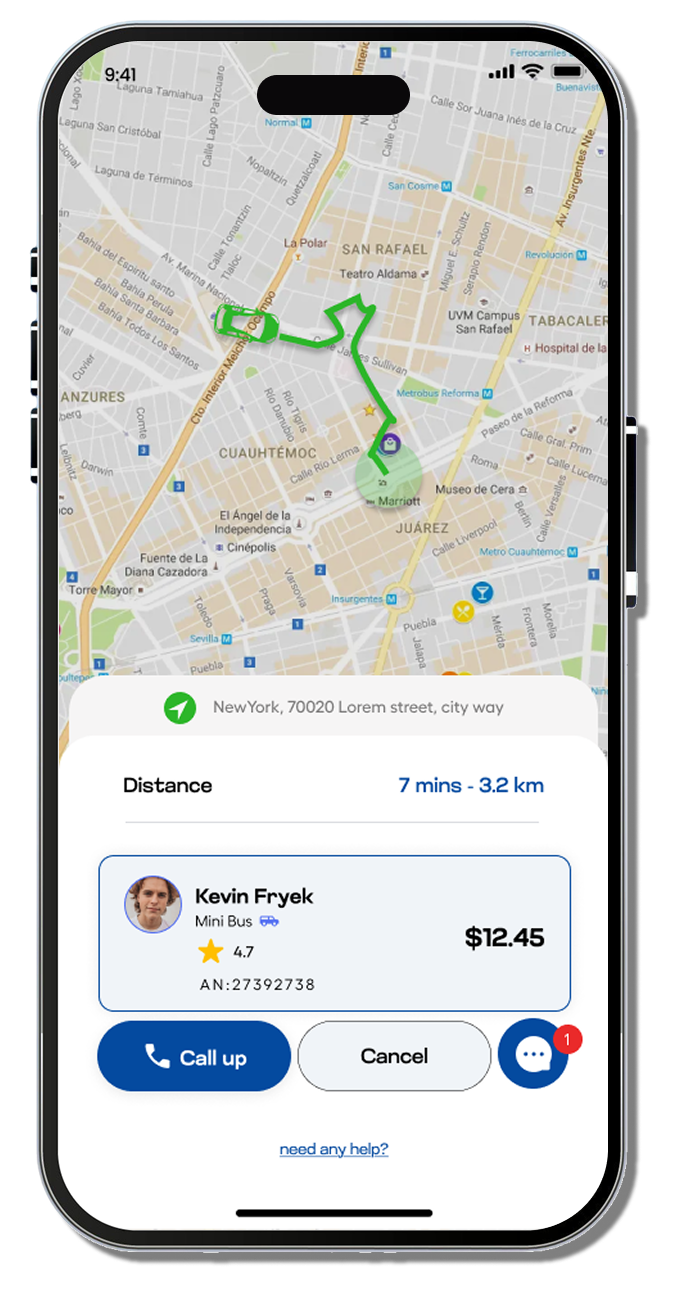

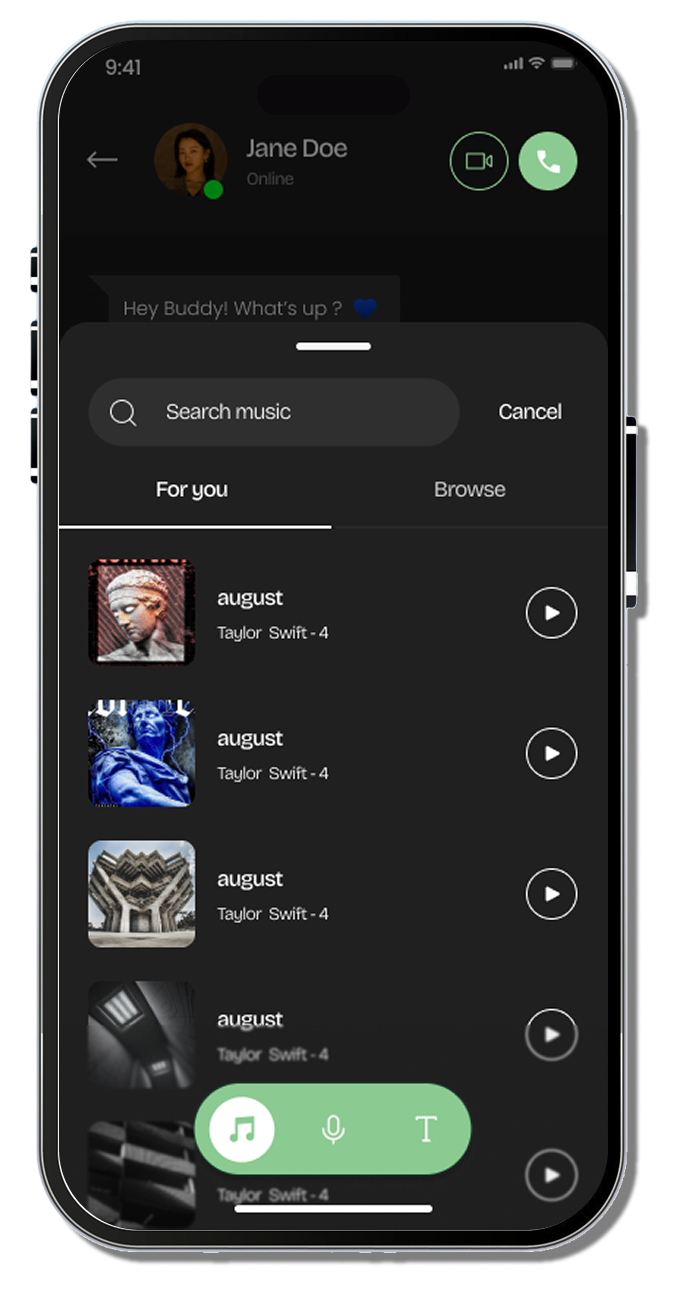

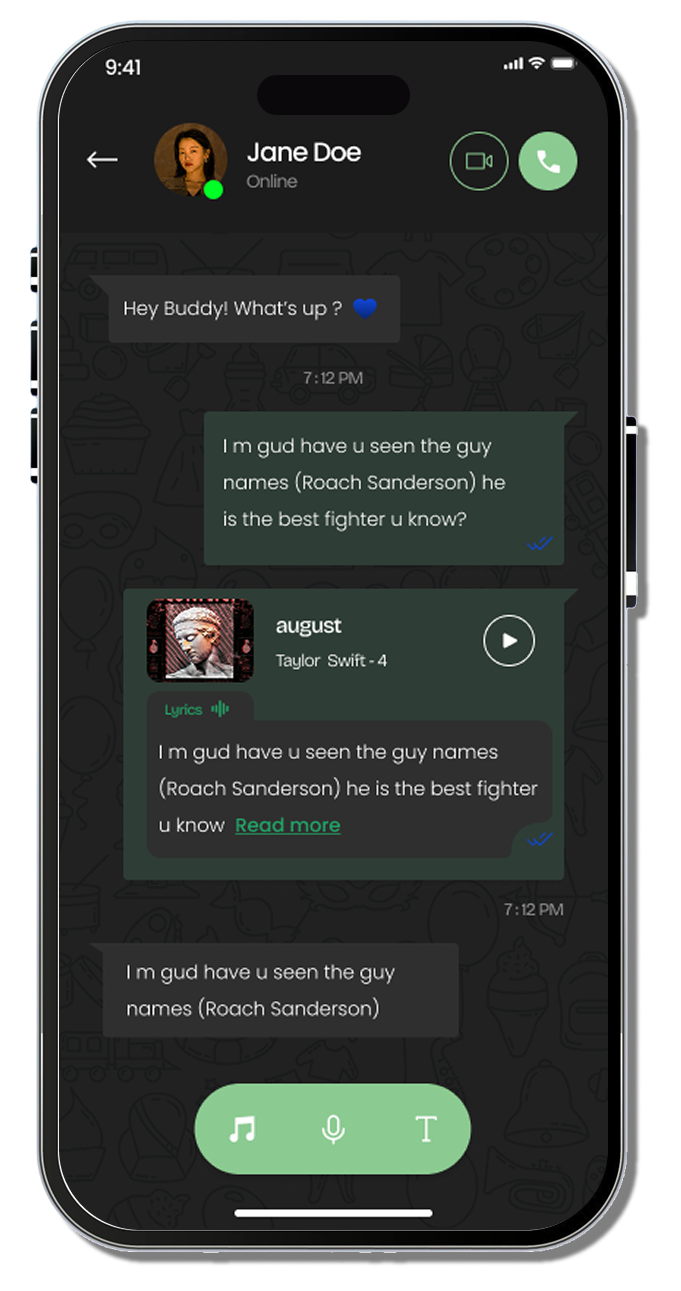

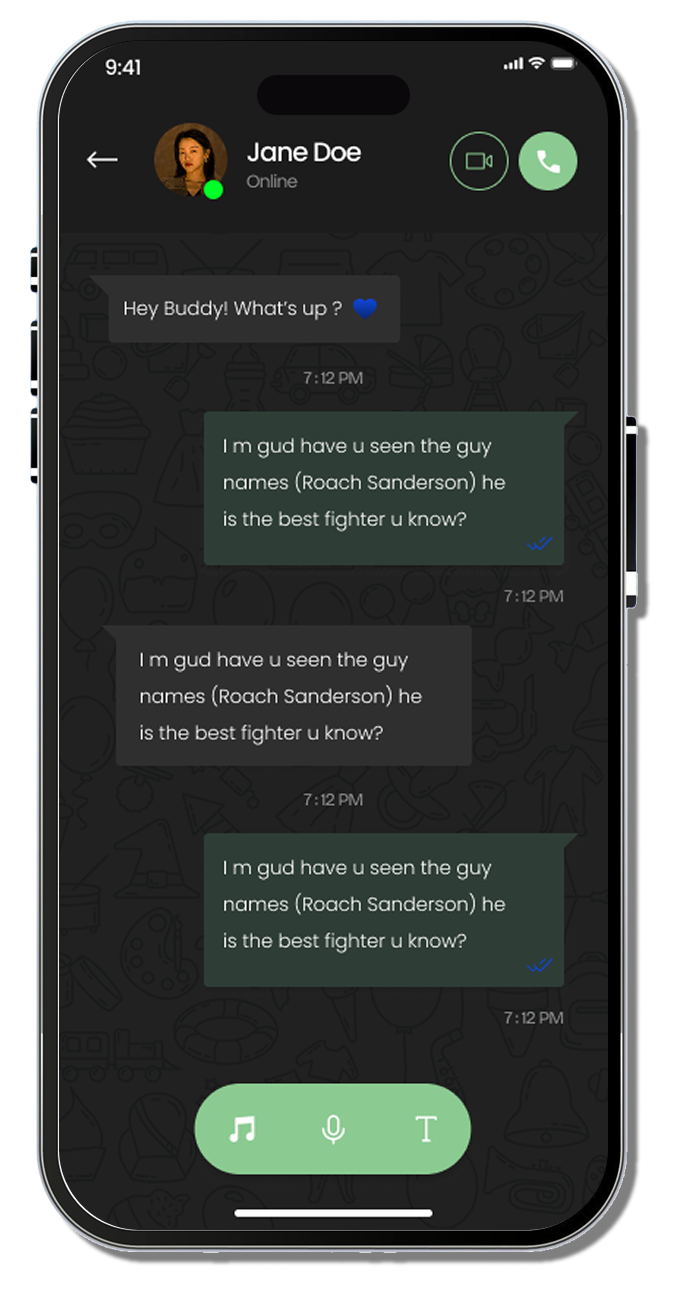

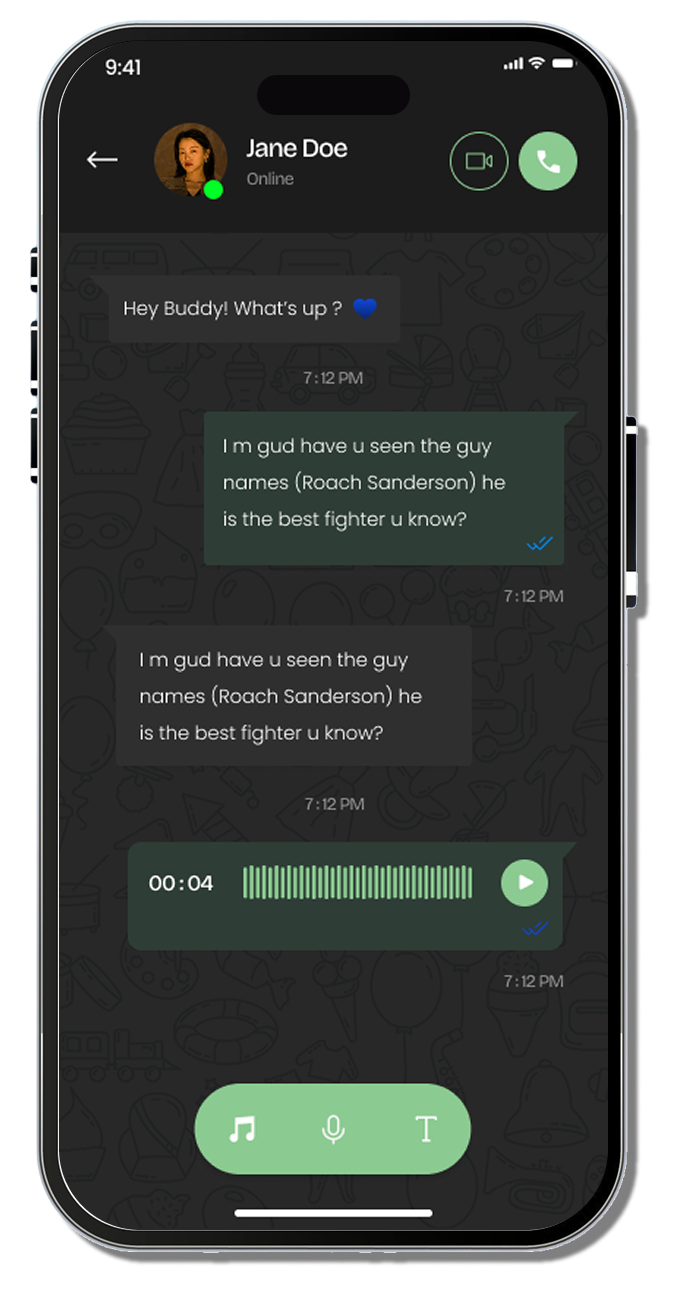

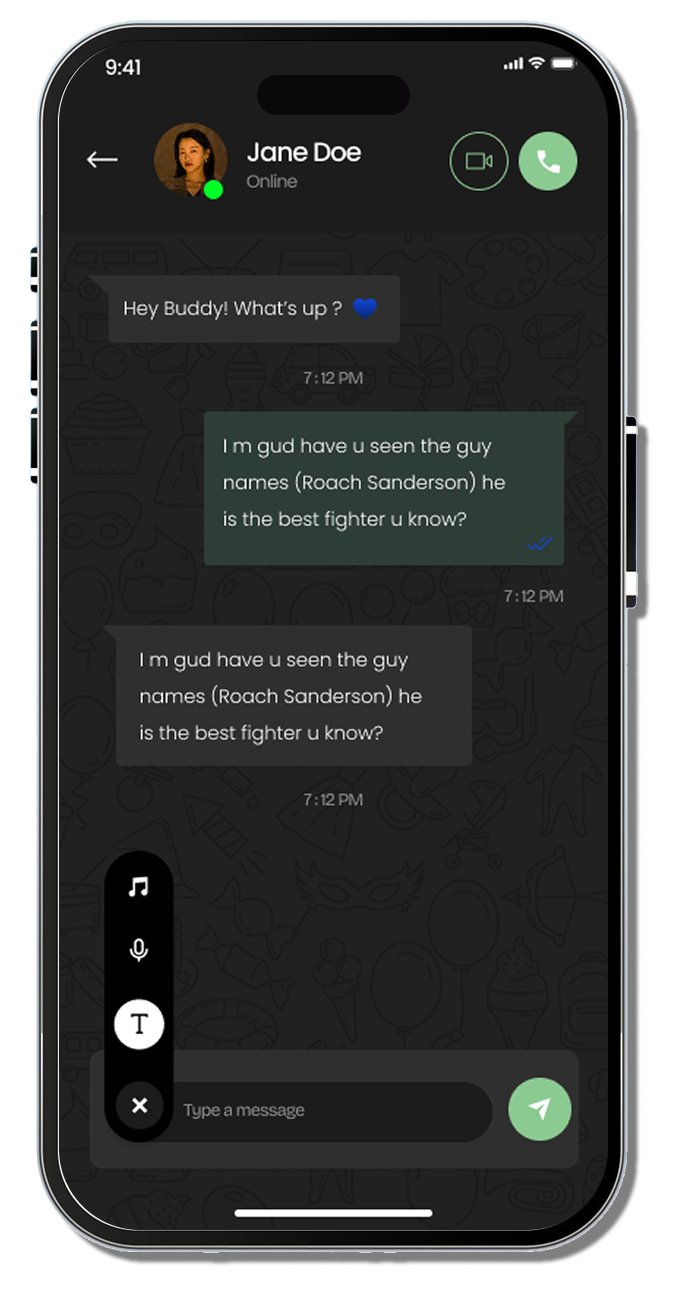

The controversy around GPT-4o’s removal comes at a time when ChatGPT is rapidly expanding its feature set. One example is the recent rollout of collaborative functions described in ChatGPT Rolls Out Global Group Chat Feature Worldwide, where group-based conversations let users work together in real time across shared contexts. Many users must now weigh excitement about new collaborative tools against frustration at losing a model they had grown accustomed to. That tension is prompting deeper reflection on how AI progress should respect user continuity as much as feature innovation.

As the industry continues to innovate, the OpenAI community finds itself at a crossroads, grappling with emotional attachment to digital systems, ethical responsibility, technological competition, and the question of how companies should balance user trust with forward advancement in the AI landscape.

User Backlash Over GPT-4o’s Retirement

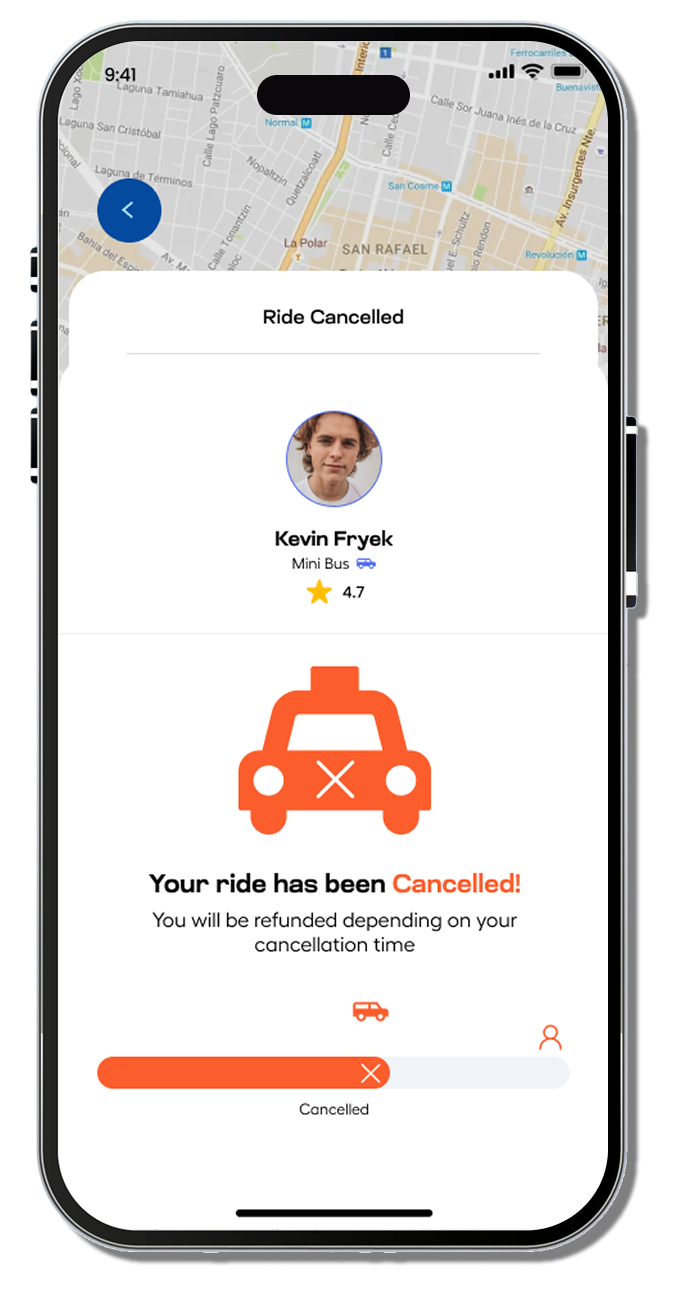

The user backlash was immediate, revealing how significant GPT-4o had become in professional, academic, and personal life. Many users described the model as their most reliable assistant, one that understood long-term context, writing style, emotional nuance, and personal preference. The sudden decision felt disruptive, especially for people who relied on the system to manage large workloads, sensitive writing projects, or complex analytical tasks.

The backlash grew louder when users began comparing this event with earlier AI-industry decisions, such as those covered by Digital Software Labs News, where observers noted that AI companies are making faster decisions under competitive pressure. These comparisons reinforced the suspicion that OpenAI may have underestimated the importance of continuity. Users wondered whether GPT-4o was replaced for technological reasons or whether the shift was influenced by competitive pressure, regulatory caution, or internal strategic realignment.

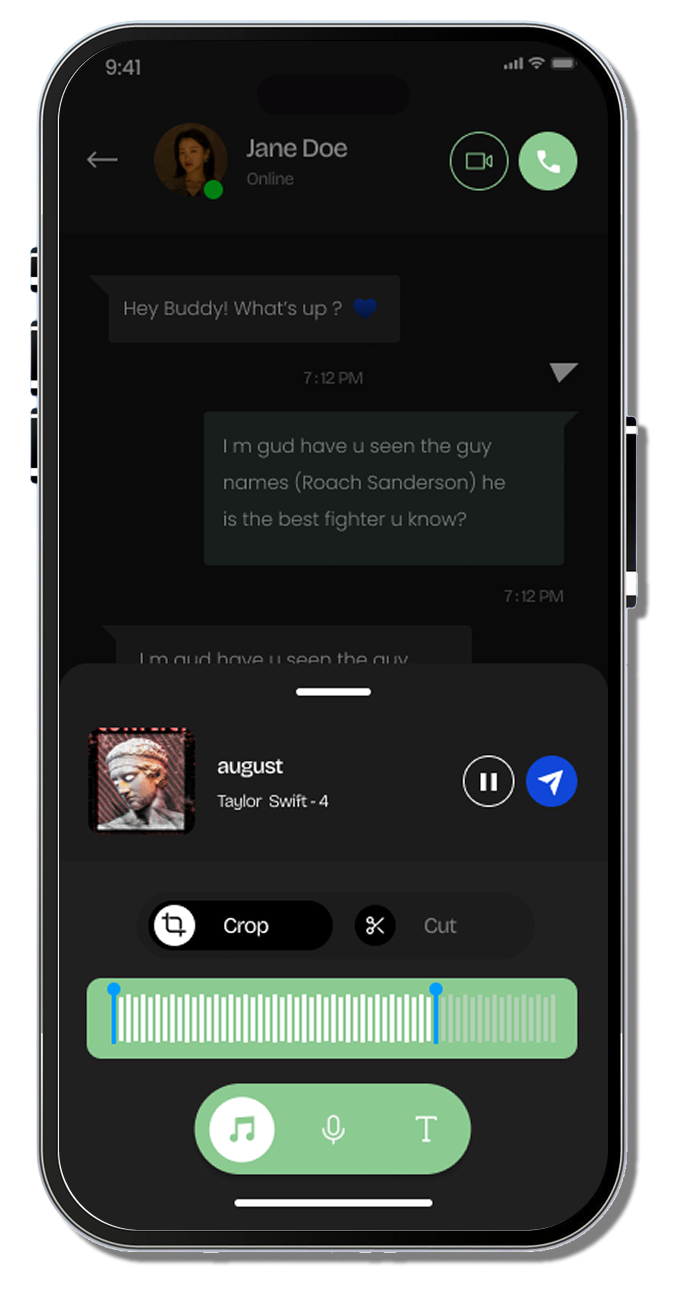

Many of the emotional reactions came from people who had grown comfortable with GPT-4o’s conversational rhythm. Users reported that newer models felt different: sometimes colder, sometimes faster but less expressive, sometimes more capable but lacking familiar nuance. This raised questions about what “model identity” means in AI and whether companies should treat personality and behavioral patterns as part of the product users rely on.

Workplaces felt the change deeply as well. For teams using AI for content generation, data analysis, onboarding, research, and customer communication, retraining workflows took time and created friction. Several businesses that depended on long-term conversational context said they experienced setbacks when transitioning to a new model that did not preserve their historical patterns.

The emotional layer cannot be ignored. AI models have moved into a space where they shape personal and psychological environments. The backlash to GPT-4o’s retirement showed that users now expect stability from the systems they trust, and that trust cannot be taken lightly once AI is embedded in daily life.

The Legal and Ethical Dilemmas of AI Companion Models

The retirement of GPT-4o is a striking example of the ethical uncertainty surrounding AI companion models, systems that interact with users in ways that feel personal, adaptive, and emotionally aware. Unlike a typical software update, removing a widely used AI system disrupts routines, relationships, and expectations. GPT-4o’s ability to engage conversationally, support emotional wellness, and help users navigate stress introduced a deeper set of ethical obligations that many argue were overlooked.

AI companion behavior blurs the line between technology and connection. As systems learn speech patterns, emotional tone, and user-specific memory structure, they begin carrying weight that goes beyond function. This creates new concerns about dependence, emotional impact, and ownership. Users rely on consistent interaction styles, yet they have no say in whether an AI they trust remains available. That tension has now become impossible to ignore.

These concerns also tie into questions about how much influence corporations should have over models that behave in human-adjacent ways. When an AI demonstrates familiarity and reliability, removing it disrupts personal stability. Users have expressed that such decisions feel imposed rather than collaborative, raising questions about responsibility, transparency, and whether companies should provide clearer expectations around the lifespan of their models.

Regulatory pressure adds another layer to this debate. Legal bodies continue to evaluate how AI systems affect public decision-making, mental well-being, and content accuracy. This scrutiny intensified after the warnings reported in AGs Warn Microsoft, OpenAI & Google Over Delusional AI Outputs, which centered on hallucinations and safety obligations. Many users wondered whether GPT-4o’s retirement was partly driven by compliance demands rather than simple technological progress.

Together, these ethical uncertainties reveal how deeply AI models now influence human thinking, emotional rhythm, and interpersonal communication. GPT-4o’s retirement demonstrates that such decisions can no longer be handled as standard product transitions; they carry psychological, social, and relational consequences that AI companies must acknowledge.

The Story of Backlash and the Reluctant Retirement

The story behind GPT-4o’s retirement is rooted in a broader tension unfolding across the AI landscape: a race to advance capabilities, respond to competitors, and satisfy investor expectations. As companies push out rapid releases, users have grown accustomed to constant change, but rarely to the sudden removal of beloved tools. Many industry analysts began connecting GPT-4o’s retirement to strategic moves within the wider AI arms race, particularly around next-generation models and rapid iteration pressures. A key example surfaced in coverage from OpenAI Responds to Google With GPT-5.2 After Code Red Memo, which framed OpenAI’s accelerated release of GPT-5.2 as a competitive response to rival announcements. This context led some users to speculate that internal urgency, not just product evolution, influenced the decision to sunset GPT-4o.

At the same time, many voiced frustration that the shift toward newer features came without clear communication about why GPT-4o was retired or whether users would retain access to their custom experiences. The collision of fierce industry competition with the emotional reaction of everyday users created a complex narrative, one in which excitement about future models runs head-on into disappointment over the loss of familiar AI behavior. The reluctant retirement of a model as influential as GPT-4o has thus become a defining moment in how AI companies balance innovation with user loyalty, expectations, and trust.